Symposium on Geometry Processing 2019

Milan 8-10 July 2019

Graduate School

Following the tradition of SGP, the colocated Graduate School on Geometry Processing features lectures on various topics in the field, given by world-class experts and leading researchers in the respective disciplines.

The school is aimed at PhD students and other postgraduate students with an interest in Geometry Processing. All participants of SGP 2019 have access to the SGP Graduate School 2019.

The school will be held in Milan, Italy.

Chairs

Marcel Campen · Osnabrück University

Sylvain Lefebvre · INRIA Nancy

Program

| Mesh Generation | Maps between Shapes |
| Coffee break ||
| Optimization in Geometry Processing | Nets in Discrete Differential Geometry |
| Lunch ||
| Spectral Geometry Processing | Reliable and Efficient Mesh Processing |
| Coffee break ||
| 3D Deep Learning | Topology Optimization for Fabrication |

Courses

-

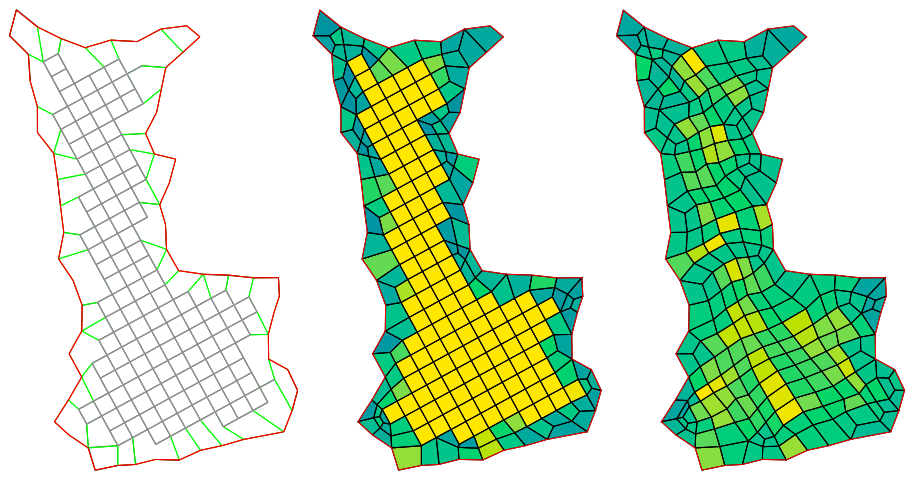

Mesh Generation

Nina Amenta · UC Davis (USA)

Discretization of surfaces or volumes into a mesh is often the first step towards rendering, simulation and further geometry processing. The quality of the mesh affects the convergence and sometimes the results of these computations.

We will focus mostly on unstructured mesh generation, look at the gap between what can be established for triangle and tetrahedral meshes and what remains difficult for quad and hexahedral meshes, and formalize some of the open problems.
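As a concrete illustration of what "mesh quality" can mean, the sketch below computes one common per-triangle shape measure, q = 4√3·A / (ℓ₁² + ℓ₂² + ℓ₃²), which equals 1 for an equilateral triangle and drops to 0 as the triangle degenerates. The choice of this particular measure and the function name are our assumptions; other measures (minimum angle, radius ratio, and so on) are equally common.

```python
import math


def triangle_quality(p0, p1, p2):
    """Shape quality 4*sqrt(3)*area / (sum of squared edge lengths), in [0, 1]."""
    # Squared lengths of the three edges
    l2 = [
        (p1[0] - p0[0]) ** 2 + (p1[1] - p0[1]) ** 2,
        (p2[0] - p1[0]) ** 2 + (p2[1] - p1[1]) ** 2,
        (p0[0] - p2[0]) ** 2 + (p0[1] - p2[1]) ** 2,
    ]
    # Unsigned area from the 2D cross product of two edge vectors
    area = 0.5 * abs(
        (p1[0] - p0[0]) * (p2[1] - p0[1]) - (p1[1] - p0[1]) * (p2[0] - p0[0])
    )
    denom = sum(l2)
    # A triangle collapsed to a single point gets quality 0
    return 0.0 if denom == 0 else 4.0 * math.sqrt(3.0) * area / denom
```

For example, the right isosceles triangle (0, 0), (1, 0), (0, 1) scores √3/2 ≈ 0.87, while any three collinear points score 0.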

Course materials: [video]

-

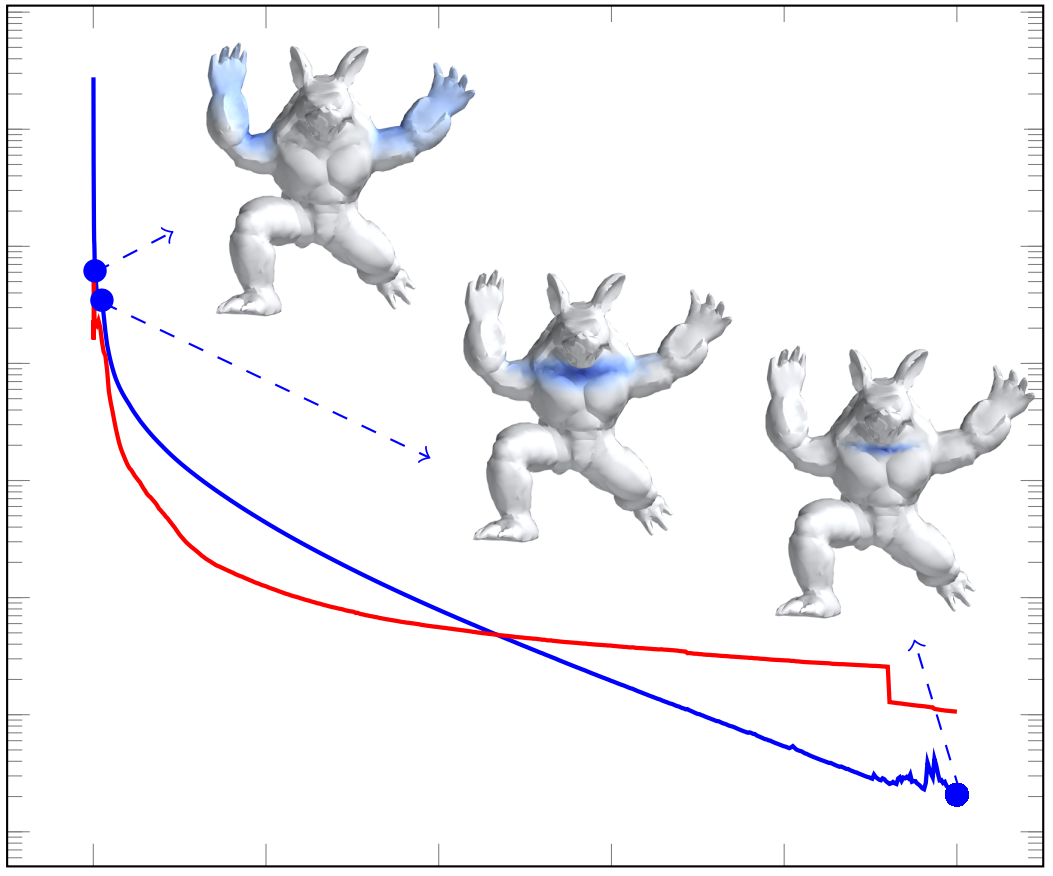

Optimization in Geometry Processing

Justin Solomon · MIT (USA)

Sebastian Claici · MIT (USA)

Countless techniques in geometry processing can be described variationally, that is, as the minimization or maximization of an objective function measuring shape properties. Algorithms for parameterization, mapping, quad meshing, alignment, smoothing, and other tasks can be expressed and solved in this powerful language.

With this motivation in mind, this course will summarize typical use cases for optimization in geometry processing. In particular, we will show how procedures for generating, editing, and comparing shapes can be expressed variationally and will summarize computational tools for solving the relevant optimization problems in practice. Along the way, we will highlight tools for prototypical problems in this class, including algorithms for unconstrained/constrained/convex optimization, lightweight schemes recently popularized in the machine learning literature, and procedures for relaxing discrete problems.
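To make "expressed variationally" concrete, here is a minimal toy example of our own (not taken from the course): smoothing a noisy 1D signal, standing in for one coordinate of a polyline, by minimizing E(x) = Σᵢ (x_{i+1} − x_i)² + w Σᵢ (x_i − x̂_i)². Setting the gradient to zero gives the linear system (DᵀD + wI) x = w x̂, where D is the forward-difference matrix; the weight `w` and the function name are assumptions.

```python
import numpy as np


def smooth_variational(x_hat, w=1.0):
    """Minimize sum (x[i+1]-x[i])^2 + w * sum (x[i]-x_hat[i])^2 in closed form."""
    x_hat = np.asarray(x_hat, dtype=float)
    n = len(x_hat)
    # Forward-difference operator D of shape (n-1, n): (D x)[i] = x[i+1] - x[i]
    D = np.diff(np.eye(n), axis=0)
    # Normal equations of the quadratic energy: (D^T D + w I) x = w x_hat
    A = D.T @ D + w * np.eye(n)
    return np.linalg.solve(A, w * x_hat)
```

Because this energy is quadratic, a single linear solve finds the minimizer; nonconvex or constrained energies are where the iterative tools described above become necessary.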

Course materials: [slides 1] · [slides 2] · [video]

-

Spectral Geometry Processing

Giuseppe Patanè · CNR-IMATI (Italy)

In geometry processing and shape analysis, several applications have been addressed through the properties of the spectral kernels and distances, such as commute-time, biharmonic, diffusion, and wave kernel distances. Spectral distances are easily defined through a filtering of the Laplacian eigenpairs and have been applied to shape segmentation and comparison with multi-scale and isometry-invariant signatures. In fact, they are intrinsic to the input shape, invariant to isometries, multi-scale, and robust to noise and tessellation.
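The "filtering of the Laplacian eigenpairs" can be written as d²(x, y) = Σ_{i≥1} φ(λᵢ)² (ψᵢ(x) − ψᵢ(y))², where (λᵢ, ψᵢ) are the eigenpairs of the Laplacian and φ is a filter: for instance φ(λ) = e^{−tλ} for the diffusion distance, φ(λ) = 1/√λ for the commute-time distance (up to a constant factor) and φ(λ) = 1/λ for the biharmonic distance. To stay self-contained, the sketch below assumes a small unit-weight path graph instead of a surface mesh; the function names are hypothetical.

```python
import numpy as np


def path_graph_laplacian(n):
    """Combinatorial Laplacian L = D - A of a path graph with unit edge weights."""
    A = np.zeros((n, n))
    for i in range(n - 1):
        A[i, i + 1] = A[i + 1, i] = 1.0
    return np.diag(A.sum(axis=1)) - A


def spectral_distance_sq(L, filt, tol=1e-10):
    """Squared spectral distances sum_i filt(lam_i)^2 (psi_i(x) - psi_i(y))^2."""
    lam, psi = np.linalg.eigh(L)
    keep = lam > tol                      # drop the zero (constant) eigenpair
    lam, psi = lam[keep], psi[:, keep]
    F = psi * filt(lam)                   # scale each eigenvector column by its filter value
    sq = np.sum(F ** 2, axis=1)
    # Pairwise squared Euclidean distances between the rows of F
    return sq[:, None] + sq[None, :] - 2.0 * F @ F.T
```

With the commute-time filter, d² equals the effective resistance between two vertices, so on a path of five vertices `spectral_distance_sq(path_graph_laplacian(5), lambda l: 1 / np.sqrt(l))[0, 4]` is 4 (four unit edges in series). The sign ambiguity of eigenvectors does not matter, because only squared differences enter.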

In this context, this course is intended to provide a background on the properties, discretization, computation, and main applications of the Laplace-Beltrami operator, the associated differential equations (e.g., harmonic equation, Laplacian eigenproblem, diffusion and wave equations), and the Laplacian spectral kernels and distances (e.g., commute-time, biharmonic, wave, diffusion distances). While previous work has focused mainly on specific applications of these topics to surface meshes, we propose a general approach that allows us to review the Laplacian kernels and distances on surfaces and volumes, and for any choice of the Laplacian weights.
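One widely used choice of Laplacian weights on triangle meshes is the cotangent Laplacian, in which edge (i, j) receives weight (cot α + cot β)/2 from the two angles opposite it. The sketch below assembles it as a dense matrix for a tiny mesh; dense storage is a simplification (real meshes call for sparse matrices), and the function name is hypothetical.

```python
import numpy as np


def cotan_laplacian(V, F):
    """Dense cotangent Laplacian L = D - W for vertices V (n x 3) and triangles F."""
    n = len(V)
    W = np.zeros((n, n))
    for tri in F:
        for k in range(3):
            # Angle at vertex o is opposite the edge (i, j)
            i, j, o = tri[k], tri[(k + 1) % 3], tri[(k + 2) % 3]
            u, v = V[i] - V[o], V[j] - V[o]
            cot = np.dot(u, v) / np.linalg.norm(np.cross(u, v))
            W[i, j] += 0.5 * cot
            W[j, i] += 0.5 * cot
    # Positive semidefinite convention: diagonal holds the row sums of W
    return np.diag(W.sum(axis=1)) - W
```

On a flat mesh the cotangent Laplacian annihilates linear functions at interior vertices; for a unit square split into four triangles around its center, applying `L` to the x-coordinates gives 0 at the center vertex.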

All the reviewed numerical schemes for computing the Laplacian spectral kernels and distances are discussed in terms of robustness, approximation accuracy, and computational cost, thus helping the audience select the most appropriate method with respect to shape representation, computational resources, and target applications.

-

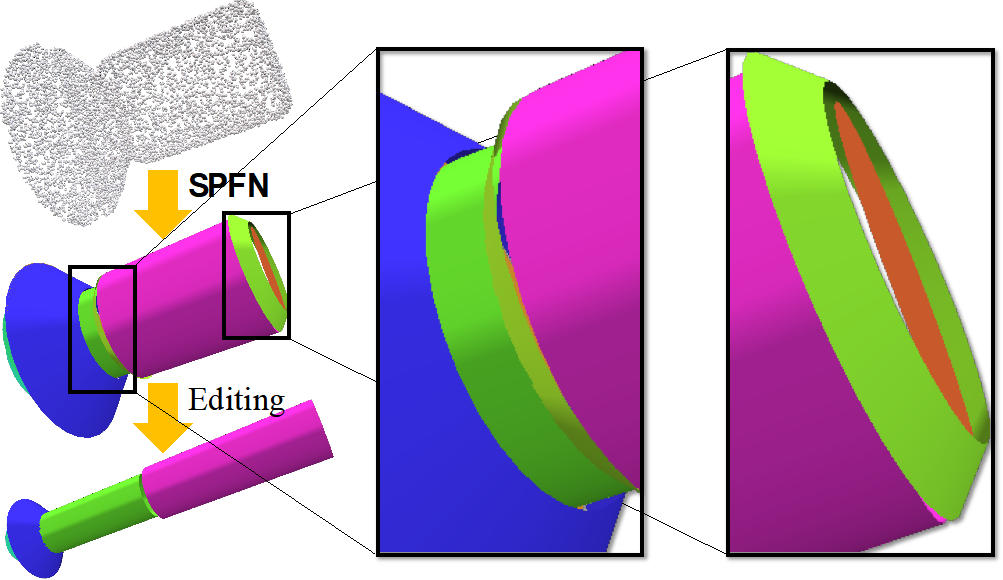

3D Deep Learning

Anastasia Dubrovina · Lyft, formerly Stanford University (USA)

Giorgos Bouritsas · Imperial College London (UK)

In the past decade, deep learning has emerged as a powerful technique for learning feature representations from large collections of examples, most notably in computer vision and natural language processing. Unlike images, 3D data comes in irregular formats such as point clouds and meshes, which makes the technology transfer from 2D to 3D far from straightforward.

In this course, we will present the foundations of 3D shape analysis using deep learning, including different types of 3D shape representations utilized for this task, and the corresponding deep learning architectures. We will further present the state-of-the-art deep learning techniques for shape classification, object recognition and retrieval, correspondence, synthesis and completion.
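One reason point clouds are harder than images is that their points have no canonical order. A well-known remedy, used by PointNet-style architectures, is to apply the same small network to every point and aggregate with a symmetric operation such as max pooling. The sketch below uses random, untrained weights purely to show the resulting permutation invariance; the layer sizes and names are arbitrary assumptions.

```python
import numpy as np

# Hypothetical, untrained weights of a shared two-layer per-point MLP (3 -> 16 -> 32)
rng = np.random.default_rng(0)
W1, b1 = rng.standard_normal((3, 16)), rng.standard_normal(16)
W2, b2 = rng.standard_normal((16, 32)), rng.standard_normal(32)


def global_feature(points):
    """Shared per-point MLP followed by max pooling over the points of an (N, 3) cloud."""
    h = np.maximum(points @ W1 + b1, 0.0)   # (N, 16), ReLU
    h = np.maximum(h @ W2 + b2, 0.0)        # (N, 32), ReLU
    return h.max(axis=0)                    # (32,), independent of point order
```

Reordering the rows of `points` leaves the output unchanged, which is exactly the property a network consuming unordered point sets needs.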

-

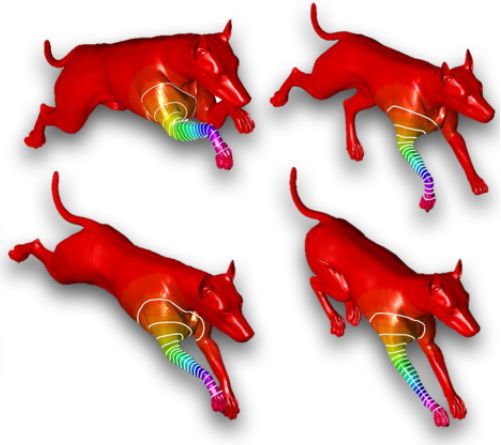

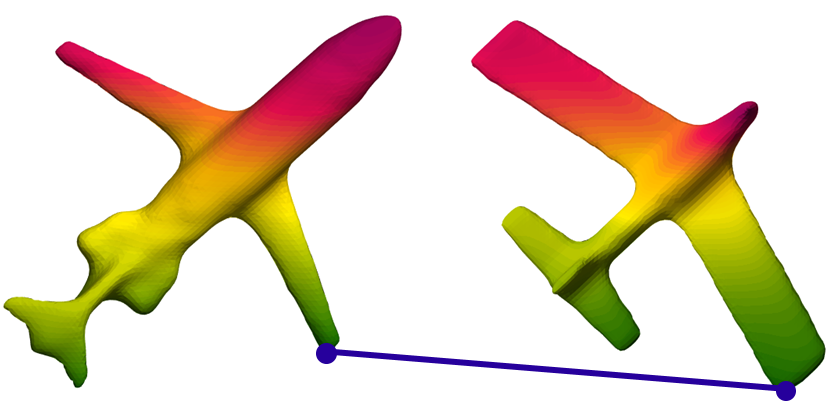

Maps between Shapes

Danielle Ezuz · Technion (Israel)

Many applications in Computer Graphics, such as deformation transfer and shape interpolation, involve joint analysis of different shapes. Such applications require a semantic correspondence between the shapes. We will discuss different methods for computation of shape correspondence, the main challenges, and the relation between shape correspondence and other geometry processing tasks.

-

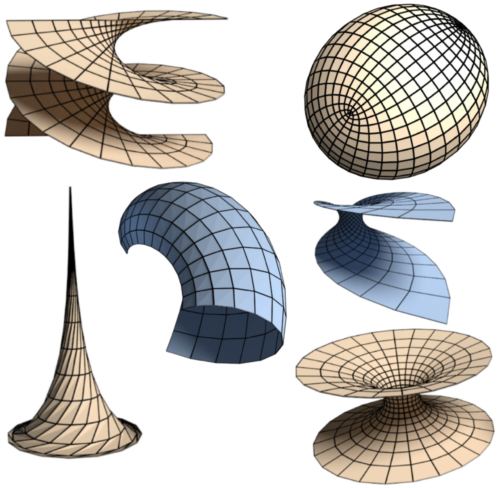

Nets in Discrete Differential Geometry

Michael Rabinovich · ETH Zurich (Switzerland)

Olga Sorkine-Hornung · ETH Zurich (Switzerland)

This mini-course offers an introduction to nets in discrete differential geometry. In this area of pure mathematics, nets are meshes with strongly regular quadrilateral grid connectivity, and they are considered as discrete analogs of smooth parameterized surfaces. Various types of constraints on the geometry of such nets can be used to define and characterize a variety of discrete classes of surfaces, such as discrete minimal surfaces, discrete developable surfaces and more. Contrary to the familiar polygonal meshes in geometry processing, the connectivity of nets is strictly fixed and encodes the parameterization of the surface in the discrete sense, with edge loops invariably representing the coordinate lines, such that the geometry of the net plays the central role. In general, discrete differential geometry strives to develop a discrete theory that respects fundamental aspects of the smooth one, following a simple motto: "Discretize the whole theory, not just the equations".

Throughout this course we will see how this approach pays off, leading to a rich theory that is also applicable to geometry processing. We describe how an intelligent choice of discretization, namely, constraints on the net geometry, results in discrete surface classes that maintain important structural properties of their smooth counterparts and are amenable to optimization. The course will be example-based, following discretizations of conjugate nets, principal curvature nets, asymptotic lines and various discretizations of constant negative Gaussian curvature surfaces, minimal surfaces and developable surfaces. [image credits: link]
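In discrete differential geometry, conjugate nets are commonly discretized as quad nets with planar faces (Q-nets), so face planarity is the kind of geometric constraint described above. A simple way to measure how far a quad (p0, p1, p2, p3) is from planar is the scalar triple product of three vectors from p0, which vanishes exactly when the four vertices are coplanar. Dividing by the cube of the mean edge length to make the measure scale-invariant is our own choice, and the function name is hypothetical.

```python
import numpy as np


def planarity_defect(p0, p1, p2, p3):
    """Scale-invariant coplanarity measure of a quad; 0 iff its 4 vertices are coplanar."""
    P = np.array([p0, p1, p2, p3], dtype=float)
    a, b, c = P[1] - P[0], P[2] - P[0], P[3] - P[0]
    # |a . (b x c)| is six times the volume of the tetrahedron p0 p1 p2 p3
    vol = abs(np.dot(a, np.cross(b, c)))
    # Mean length of the four boundary edges p0p1, p1p2, p2p3, p3p0
    edges = np.linalg.norm(np.roll(P, -1, axis=0) - P, axis=1)
    return vol / edges.mean() ** 3
```

A constraint of the form `planarity_defect(...) == 0` on every face is the type of condition an optimizer would enforce to keep a quad net conjugate.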

-

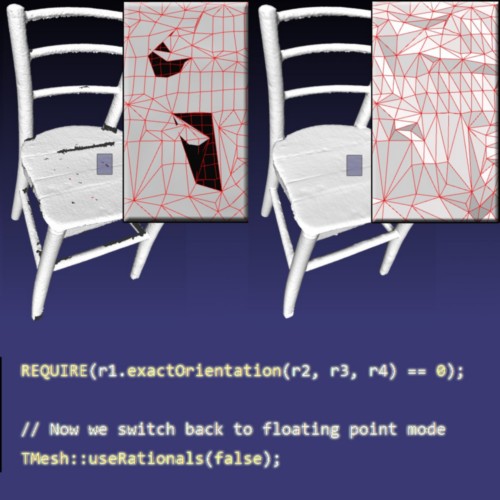

Reliable and Efficient Mesh Processing

Marco Attene · CNR-IMATI (Italy)

For researchers and practitioners dealing with geometry processing, turning a theoretical algorithm into a reliable computer program can become a tricky and sometimes frustrating process. Quite often, developers overlook or ignore two fundamental facts: (1) real-world input meshes do not necessarily conform to the requirements of the algorithm; (2) theoretical guarantees might be spoiled by approximating real numbers with finite floating-point representations.

This course explains how to deal with these two facts. We analyse the main potential pitfalls that prevent a geometric program from being reliable, and discuss existing tools and methods that support developers in this tricky task.

In the first part we focus on input models: defects and flaws in polygon meshes are categorized, and algorithms to turn these "bad" models into well-formed surfaces are discussed.
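Two of the simplest mesh defects to detect are boundary edges (used by exactly one face) and non-manifold edges (shared by more than two faces). The sketch below, with a hypothetical function name, counts face incidences per undirected edge; it only detects, does not repair, and ignores other defects such as self-intersections or inconsistent orientation.

```python
from collections import Counter


def classify_edges(faces):
    """Return (boundary_edges, non_manifold_edges) of a polygon mesh given as vertex-index tuples."""
    count = Counter()
    for f in faces:
        for k in range(len(f)):
            a, b = f[k], f[(k + 1) % len(f)]
            # Store each undirected edge with its smaller index first
            count[(min(a, b), max(a, b))] += 1
    boundary = sorted(e for e, n in count.items() if n == 1)
    non_manifold = sorted(e for e, n in count.items() if n > 2)
    return boundary, non_manifold
```

For two triangles sharing edge (0, 2), the four outer edges are reported as boundary; adding a third triangle on an already shared edge makes that edge non-manifold.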

In the second part we consider the implementations: we review state-of-the-art methods to develop robust geometric predicates, to avoid round-off errors through exact arithmetic representations, and to combine robustness with efficiency by exploiting geometric filtering, symbolic calculations, and hybrid kernels.
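The idea of combining a fast floating-point evaluation with an exact fallback can be illustrated on the 2D orientation predicate: accept the floating-point sign when |det| provably exceeds a round-off error bound, and otherwise recompute exactly. The sketch below uses Python's `fractions.Fraction` for the exact fallback (production kernels use much faster expansion arithmetic) and borrows the static error bound from Shewchuk's adaptive predicates; treat it as an illustration, not a production kernel.

```python
from fractions import Fraction

# Static error-bound constant for orient2d from Shewchuk's adaptive predicates,
# with EPS = 2**-53 (half an ulp of 1.0 for IEEE 754 doubles).
EPS = 2.0 ** -53
CCW_ERRBOUND_A = (3.0 + 16.0 * EPS) * EPS


def orient2d_exact(a, b, c):
    """Sign of det[[ax-cx, ay-cy], [bx-cx, by-cy]] in exact rational arithmetic."""
    ax, ay = Fraction(a[0]), Fraction(a[1])
    bx, by = Fraction(b[0]), Fraction(b[1])
    cx, cy = Fraction(c[0]), Fraction(c[1])
    det = (ax - cx) * (by - cy) - (ay - cy) * (bx - cx)
    return (det > 0) - (det < 0)


def orient2d(a, b, c):
    """+1 if a, b, c turn counterclockwise, -1 if clockwise, 0 if collinear."""
    detleft = (a[0] - c[0]) * (b[1] - c[1])
    detright = (a[1] - c[1]) * (b[0] - c[0])
    det = detleft - detright
    # Floating-point filter: trust the sign only if |det| exceeds the error bound
    errbound = CCW_ERRBOUND_A * (abs(detleft) + abs(detright))
    if det > errbound or -det > errbound:
        return 1 if det > 0 else -1
    # Uncertain case: fall back to exact arithmetic
    return orient2d_exact(a, b, c)
```

For example, the points (0.1, 0.1), (0.2, 0.2), (0.3, 0.3) lie exactly on the line y = x (each stored double has x equal to y), and the predicate reports 0 for them; the exact branch is only reached in such near-degenerate cases.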

-

Topology Optimization for Fabrication

Jun Wu · TU Delft (Netherlands)

Advances in 3D printing enable the fabrication of structures with unprecedented geometric complexity. In design engineering, the benefits of this manufacturing flexibility are probably best exploited in combination with the design of structures by topology optimization. Based on a volumetric element-wise parametrization of the design space, topology optimization aims at finding the optimal material distribution for a performance measure (e.g., maximum stiffness), under a given set of constraints. Besides their highly optimized physical properties, the resultant shapes typically exhibit good visual qualities, which are appreciated in industrial design and architecture.

In the first part of this course, we review the basics of topology optimization, and density-based approaches for stiffness maximization in particular. In the second part, we focus on some recent developments that control the resulting geometric features for 3D printing. MATLAB code is provided for both parts.
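In density-based approaches, each element e carries a density x_e ∈ [0, 1], and a common interpolation (SIMP) sets its Young's modulus to E(x_e) = E_min + x_e^p (E_0 − E_min) with a penalty exponent p, typically 3. The sketch below shows that interpolation and an optimality-criteria density update with bisection on the volume multiplier, in the style of widely used educational codes. It is not the course's MATLAB code: it assumes the compliance sensitivities `dc` come from a finite-element solve that is not shown, and all parameter values are assumptions.

```python
import numpy as np


def simp_modulus(x, E0=1.0, Emin=1e-9, p=3.0):
    """SIMP interpolation of Young's modulus from element densities x in [0, 1]."""
    return Emin + x ** p * (E0 - Emin)


def oc_update(x, dc, dv, volfrac, move=0.2):
    """Optimality-criteria update; dc < 0 are compliance sensitivities, dv > 0 volume sensitivities."""
    l1, l2 = 0.0, 1e9
    while (l2 - l1) / (l1 + l2) > 1e-3:
        lmid = 0.5 * (l1 + l2)
        # Scale densities by the OC factor, then clip to the move limit and to [0, 1]
        x_new = np.clip(x * np.sqrt(-dc / dv / lmid), x - move, x + move)
        x_new = np.clip(x_new, 0.0, 1.0)
        # Bisection on the Lagrange multiplier to meet the target volume fraction
        if x_new.mean() > volfrac:
            l1 = lmid
        else:
            l2 = lmid
    return x_new
```

Elements with larger compliance sensitivity (more useful for stiffness) gain density while the total volume stays at `volfrac`; the penalty p > 1 makes intermediate densities structurally inefficient, which pushes designs towards solid/void layouts.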

Local Scientific Committee

Exams for participating students who are candidates in Italian PhD programs will be handled by the local scientific committee.

Marco Tarini · Department of Computer Science · Univ. Milan (chair)

Alberto Alzati · Department of Mathematics · Univ. Milan

Umberto Castellani · Università di Verona

Davide Gadia · Department of Computer Science · Univ. Milan

Daniela Giorgi · CNR-ISTI

Emanuele Rodolà · Università di Roma “La Sapienza”

Previous Editions

The previous editions of the SGP Graduate School can be found at http://school.geometryprocessing.org/